Tech executives have spent the last two years making sweeping claims about what AI will mean for human labor. In a December 2025 study by the Harvard Business Review, 90 percent of executives surveyed reported seeing a “moderate or a great deal of value from AI.” But public statements from prominent industry leaders reveal that there is no unified technocratic perspective.

There are those who see AI's reduction of human labor as a path toward market efficiency: Former OpenAI CTO Mira Murati suggested AI would eliminate jobs that “shouldn’t have been there in the first place”; OpenAI CEO Sam Altman has at times engaged in philosophical discussions about what it means to have a meaningful job in a future where AI wipes out entire industries and categories of work.

There are also those who clash: Palantir CEO Alex Karp has questioned the value of training in the humanities in the AI era, while Anthropic co-founder Daniela Amodei has argued that humanistic judgment and interpretation may become even more important. Together, these claims do not offer a coherent labor vision. They expose how unstable elite thinking is on the future of human work.

Perhaps counterintuitively, technocrats are neither the best informed about AI and labor nor the most affected by it. In fact, the most relevant perspective and expertise are found outside the boardroom. It is American workers who are most preoccupied with how AI will shape labor, now wondering whether their skills will matter, whether their jobs will remain stable, and whether adaptation is possible on fair terms. If we want to understand what AI will do to society, we should start with workers: After all, the workplace is where technological power first becomes a lived reality.

The policy responses to these anxieties tend to swing between two extremes: either halting innovation altogether (a Luddite approach), or accepting it and compensating workers for their losses (a technophile approach). Both approaches miss a more practical point: Workers are not only the people most affected by AI deployment, but also a critical source of information about how jobs actually work. Excluding them leads to worse decisions, weaker implementation, and more avoidable disruption. A more effective agenda would center labor through four practical interventions: clearer certification and recertification pathways, performance standards for workplace AI, co-training and co-design with workers, and advance communication about AI rollout and capability upgrades. In AI-dominated workplaces, fair terms could look like transparency, non-discrimination provisions, and collective bargaining power over wages, working time, surveillance, evaluation, and job security, along with robust rights to information about the technology and to contest its use.

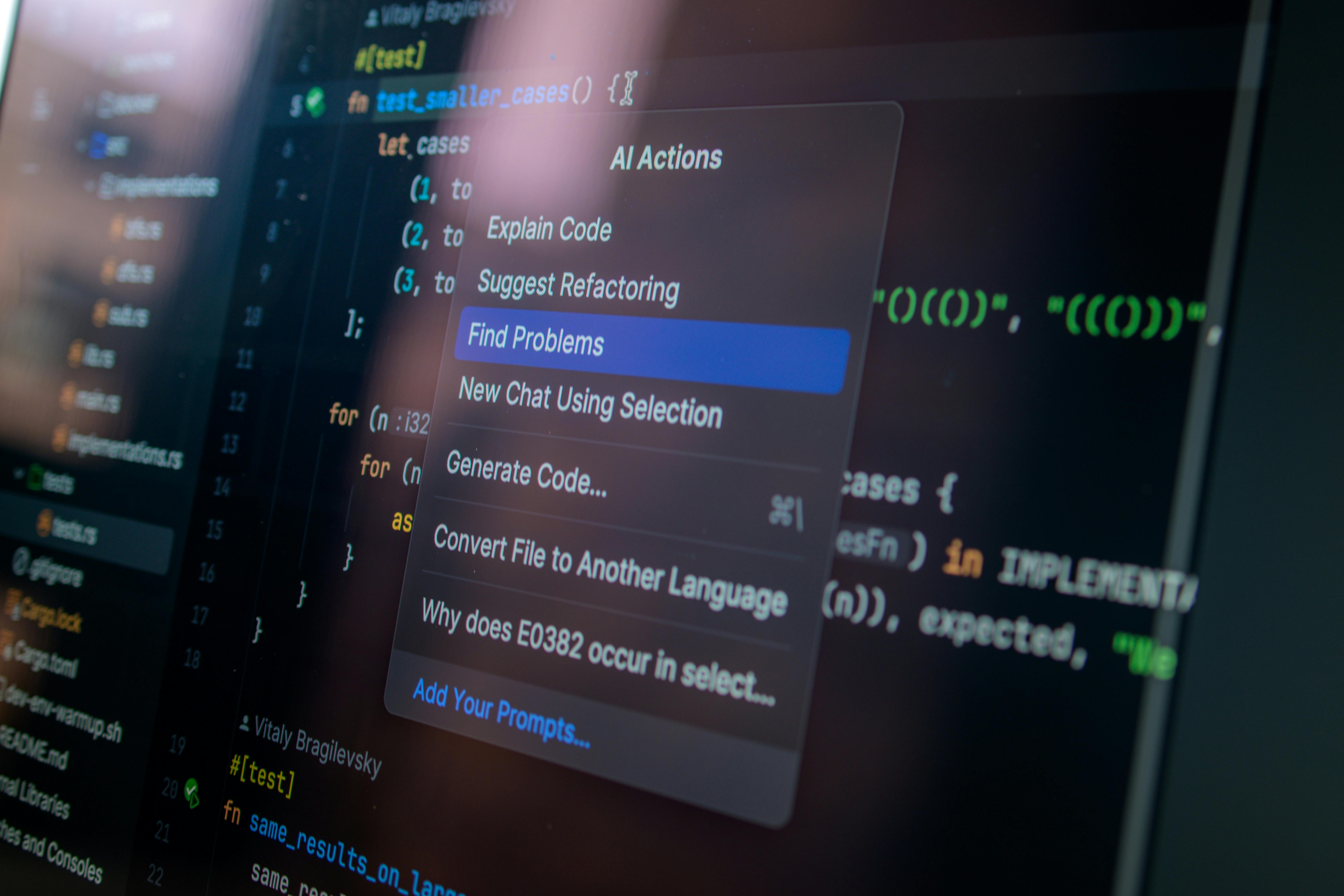

While generative AI attracts the most attention, with tools like ChatGPT or Claude (which can create new text, code, image, or audio content) enjoying impressive popularity and broad appeal, it is only one part of a broader transition to AI-dominated workplaces. Employers are deploying several distinct systems, including generative, analytical, deterministic, and increasingly agentic AI systems. These tools do not affect labor in the same way: Some generate content, others create surveillance mechanisms for the workplace, and some can carry out multi-step actions with limited oversight. These distinctions matter for policy because the risks and penetration of these tools are not uniform across sectors. Workers are often the first to see where these systems mismeasure performance, harden bad rules, erode skill, or quietly transfer authority from employees and supervisors to software. Some of these tools may augment rather than replace workers, but all of them raise the danger of deskilling and loss of workers’ agency.

The fear that AI will replace human labor has arguably already been realized: 1.28 million fewer people were hired in 2025 than in 2024. The year 2025 also saw 55,000 layoffs directly attributed to AI, and 40 percent of workers in 2026 reported anxiety over AI-related job loss. MIT research from 2025 suggests that 11.7 percent of the current US labor market could be replaced by AI with its current capabilities.

Business elites have been quick to propose their own solutions to the AI-induced labor crisis, but these policies ultimately fall short. In an interview at Davos, Jamie Dimon, CEO of JPMorgan, came out in support of local governments banning or controlling AI-related layoffs. However, blanket layoff bans would likely stifle productivity gains from AI, dulling innovation and setting the United States behind its geopolitical competitors. Such bans could also push firms to adopt AI covertly and with less transparency.

In the world of business, Dimon’s perspective is an anomaly. Many tech leaders have instead supported a version of universal basic income (UBI) designed to compensate people after job disruption while letting the gains from technological innovation continue. Sam Altman has funded the most comprehensive UBI pilot: The Unconditional Cash Study, which pulls from a $60 million pool to give an unconditional $1,000 per month for three years to “some of the poorest Americans.” While this study was not specifically directed at people affected by AI, Altman has pointed to it as a preview of a solution to the increased inequality and job loss that AI may bring. Meta CEO Mark Zuckerberg, Tesla CEO Elon Musk, AI pioneer Geoffrey Hinton, and others have all called UBI a necessity amid AI integration at the enterprise level. But UBI alone also fails. It ignores employment’s role in cultivating human capital and social relationships. Monetary compensation cannot make up for the loss of these soft skills and connections.

Policies like UBI flatten the complex needs of workers affected by AI. To ensure that workers are compensated for their losses holistically, systems must allow workers to influence and lead the AI transition in real time. Income support can soften the immediate blow of AI disruption, but by itself it does little to preserve the skills, judgment, and adaptability that allow workers to remain active participants in a changing economy.

First, policymakers and employers need clearer certification and recertification pathways for workers navigating AI-driven change. “AI readiness” has become a buzzword, but does anyone actually know what it means? As AI systems quickly evolve, many workers are left guessing which new skills are actually valuable or transferable. A universal certification framework would make clear which AI tools workers should learn to use, and how. Certification would create recognized programs that verify competence in these agreed-upon skills; recertification programs would give workers updated training when those tools, workflows, or occupational requirements materially change. Government agencies will play a critical role in evaluating certification programs and centers. Programs like Ohio’s reimbursement model support people completing various reskilling certifications, but the model also provides an enormous list of possible programs with little guidance on which are best. Without strict transparency and quality standards, certification systems risk becoming another confusing marketplace rather than a tool of empowerment.

Second, because these systems often fall short of what employers and vendors promise, workers need clearer performance standards for workplace AI. Research shows that exposure to AI does not automatically mean displacement, and adoption does not guarantee productivity. AI has known limitations, such as hallucinations, jailbreaking of models, and cybersecurity concerns. Enterprises therefore cannot be dismissive of workers who report these shortcomings: Workers are often the first to see where these tools fail in practice, so their feedback should be treated as evidence, not resistance. Performance evaluation should include (and even center) worker reporting.

Third, to achieve maximum productivity, AI adoption should rely on co-training and co-design with workers and their representatives. Carnegie Mellon’s Block Center has led many research projects that have illustrated how important friendly employers are for effective tech implementation. The center’s collaboration with the hospitality workers’ trade union UNITE HERE emphasized co-development of policies and tools with both workers and management in place of top-down imposition. Additionally, the center’s research shows that imposing analytical AI tools, like schedulers, does not lead to best practices or worker satisfaction; rather, co-designing tools with employees, with the support of friendly employers, was key to AI-tool adoption.

Finally, workers deserve advance communication about AI adoption rather than being notified only when layoffs are imminent. While existing US labor law already mandates advance notification of layoffs through the WARN Act, the law is technology-neutral and does not require a structured transition for automation- or AI-driven layoffs. In the 2025-2026 legislative session, New York state senator Rachel May (D, WF) proposed NY S8589A, a bill that “requires covered employers to provide notice to certain affected employees prior to any technological displacement; requires reporting; [and] requires a workforce transition period.” The proposal is a powerful blueprint for legislation in other states, as it directly targets the need for technological transparency and requires a transition period in which employers are obligated to mitigate and manage tech-driven job loss. However, by the time layoffs are on the way, notice already comes late, a situation that earlier transparency could have prevented. It is important to extend the momentum of this legislation to notifying workers before AI adoption and capability upgrades.

There is real anxiety over job displacement in the AI age, intensified by the lack of preparedness and transparency. By centering workers as active policy participants, the four solutions proposed above build a durable path toward a worker-centered, AI-adopting workplace. Certification pathways, performance standards, co-training and co-design initiatives, and advance communication are all reasonable and implementable policy solutions. Realistic and worker-empowering policy offers a middle path that can uplift labor while delivering productivity gains for companies.